While testing a biometric authentication process, we onboarded a Hollywood celebrity into a client's biometrically authenticated application.

The app was designed to onboard only real users, requiring identity verification with documents and liveness detection. But our client wanted to know whether these safeguards would hold up against deepfakes.

They didn't.

Our team passed AI-generated content through the system, successfully onboarding an A-list celeb.

Funny, yes. But also terrifying.

More than 1 in 3 companies use biometric authentication and customer onboarding systems like the one we hacked as their primary method for verifying users.

Many of these systems protect extremely sensitive data, yet it is likely that only a small minority of the companies deploying them fully test the biometric authentication they rely on.

At SECFORCE, we have the in-house expertise (check out our “LLM-Goat” project) to deliver realistic biometric and AI/LLM Testing solutions.

In this article, we hack into the brain of SECFORCE’s biometric testing expert, Lorenzo Vogelsang, to understand how biometric systems are tested and compromised, and the real threats they face in 2026.

Biometric Attacks Are Very Different From the Attacks Organisations Usually Test Against

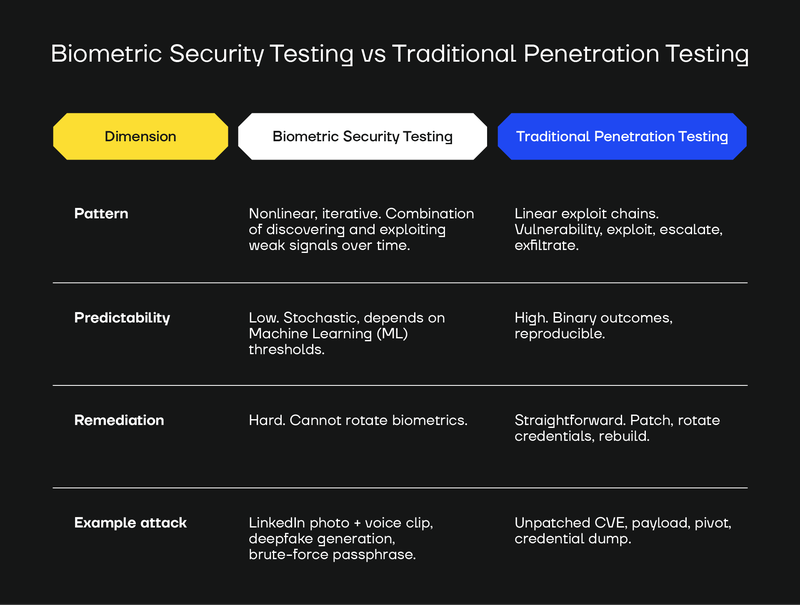

Biometric authentication testing is not the same as "normal" penetration testing or red teaming because biometric attacks don't happen like normal cyberattacks.

A "traditional" penetration test is a relatively predictable technical process.

Attackers find and exploit vulnerabilities in applications based on their code, configurations, and other programmatic weak points.

Biometric security testing is much more "off-piste" because biometric attacks themselves are much more varied. A biometric security test involves a mix of mobile device hacking, audio and video manipulation, synthetic media tooling, and, crucially, creative thinking.

In the table below, we compare biometric testing with traditional penetration testing.

As a further example of how biometric compromise happens, consider this (fictional) attack chain:

- An attacker finds a target on LinkedIn (let's say an executive at a company that uses biometric authentication for high-value transfers).

- They locate a podcast episode where the target spoke.

- They pull the target’s profile photo from the company website.

- Using this raw material, the attacker generates a cloned voice and animates the photo into a video. They practise the authentication passphrase, repeating it thousands of times with slight variations in pacing, pitch, and inflection.

Eventually, one attempt scores above the detection threshold built into the application's biometric authentication model. Biometric authentication works on confidence scoring: if an attempt exceeds a set threshold (say, 70%), the system treats the user as legitimate.

The attack succeeds.
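The arithmetic behind this attack chain can be sketched in a few lines of Python. The sketch is illustrative only: it assumes every attempt is independent and has the same chance of clearing the threshold, which real attempts will not strictly satisfy, and the 0.1% per-attempt rate is a made-up figure rather than a measurement. The function names are ours.

```python
import math


def cumulative_success_probability(per_attempt_rate: float, attempts: int) -> float:
    """Chance that at least one of `attempts` tries clears the threshold.

    Assumes attempts are independent and equally likely to succeed.
    """
    if not 0.0 <= per_attempt_rate <= 1.0:
        raise ValueError("per_attempt_rate must be between 0 and 1")
    if attempts < 0:
        raise ValueError("attempts must not be negative")
    return 1.0 - (1.0 - per_attempt_rate) ** attempts


def attempts_needed(per_attempt_rate: float, target_probability: float) -> int:
    """Smallest number of attempts giving at least `target_probability` overall."""
    if not 0.0 < per_attempt_rate < 1.0:
        raise ValueError("per_attempt_rate must be strictly between 0 and 1")
    if not 0.0 < target_probability < 1.0:
        raise ValueError("target_probability must be strictly between 0 and 1")
    return math.ceil(math.log(1.0 - target_probability) / math.log(1.0 - per_attempt_rate))


if __name__ == "__main__":
    # Hypothetical figure: 1 in 1,000 synthetic samples scores above the threshold.
    rate = 0.001
    print(f"{cumulative_success_probability(rate, 1000):.3f}")  # prints 0.632
    print(attempts_needed(rate, 0.5))  # prints 693
```

Under those assumptions, a per-attempt success rate of one in a thousand gives roughly a 63% chance of at least one acceptance after 1,000 tries, so even a low per-attempt rate adds up quickly when attempts are cheap.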

Biometric Authentication Systems Face 4 Unique Security Challenges

From a hacker wearing a realistic rubber mask to a speaker repeating "my voice is my password" a hundred times, biometric systems face some highly unusual attack vectors.

There are four unique biometric threat types we've seen in the wild and replicated in testing.

1. Voice authentication bypass

Cloned or AI-generated voices built from just a few seconds of audio routinely fool biometric authentication systems.

We've convinced authentication systems to accept fully synthetic speech, and we've also bypassed them with audio replayed through speakers, truncated challenge phrases, and static phrases looped on repeat.

2. Deepfake videos

When we test biometric systems, we often find that AI-generated face videos are accepted as authentic. Shockingly, this happens even when we use watermarked synthetic video from commercial tools.

Without liveness detection (requiring users to move, blink, or complete active challenges), most systems will accept even basic deepfakes as genuine.

3. Injected media and input bypass

One of the biggest surprises for our clients is how easily attackers can hijack device inputs.

We've used virtual cameras and microphones to stream synthetic media directly into apps, and found that the system accepts it as if it came from real hardware.

4. Payload tampering

Attackers can also tamper with data payloads to change who the system thinks the user is after authentication has nominally succeeded. That's how we got one authentication system to recognise an AI-generated deepfake as a legitimate user.

Testing Biometric Authentication Means Testing for 3 Attack Vectors In Particular

There are many different ways to break into a biometrically authenticated application.

If your organisation is about to deploy (or has already deployed) a biometric authentication system, there are three specific vectors worth testing against: presentation of inputs, injection of inputs, and the integrity of the data flowing in and out of the app.

Here's how SECFORCE tests biometric authentication across each of these attack vectors.

1. Presentation attacks and deepfakes

We simulate the inputs an attacker might present to a device during an attack.

This includes both low-tech and sophisticated methods: still images, screen-based photos, printed face images, masks, and replayed audio, as well as AI-cloned voice samples and deepfake videos.

Testing against a variety of media shows you whether the system can distinguish real human behaviour from recreated, replayed, or AI-generated samples.

It also helps answer fundamental questions, such as whether a truncated version of a passphrase would allow someone to gain access.
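As a rough illustration of the truncation question, a tester might first enumerate the shortened phrases worth recording or synthesising. The helper below is a hypothetical sketch, not SECFORCE tooling: it works on the text of the passphrase only, splits on whitespace, and leaves the audio generation and submission steps out entirely.

```python
def truncated_variants(passphrase: str) -> list[str]:
    """Return word-level prefixes and suffixes of a passphrase.

    The full passphrase itself is excluded, since the goal is to list
    only the shortened versions a tester would try.
    """
    words = passphrase.split()
    variants: list[str] = []
    for count in range(1, len(words)):
        variants.append(" ".join(words[:count]))   # leading words only
        variants.append(" ".join(words[-count:]))  # trailing words only
    # Remove duplicates while keeping the shortest-first ordering.
    return list(dict.fromkeys(variants))


if __name__ == "__main__":
    for phrase in truncated_variants("my voice is my password"):
        print(phrase)
```

If any of these shortened phrases is accepted, the system is matching on voice characteristics alone rather than on the complete challenge.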

2. Injection attacks and mobile application security

If your biometric process is one part of the security equation, how the application relates to the biometric engine is another.

It is critical to test the controls that the application itself places on the biometric process because hackers will attack them, too.

Before the biometric engine even sees a sample, the application that contains it should enforce strict rules like:

- Accept input only from the real device's camera.

- Accept audio only from the real device's microphone.

- Reject virtual devices.

- Detect tampering in the app's logic.

- Protect biometric data inside the device.

A compromised app can allow a hacker to bypass authentication before biometrics reach the machine-learning layer. If an attacker can tamper with the data on the device, they can replace the user's face or voice with anything they want.
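The rules listed above can be pictured as a gate that runs before a sample is passed to the biometric engine. The sketch below is deliberately simplified and every name in it is hypothetical: `CaptureReport`, its fields, and the allowed source labels are assumptions made for illustration, and how an app would reliably obtain these signals is out of scope here.

```python
from dataclasses import dataclass

# Hypothetical labels for input sources the policy is willing to accept.
ALLOWED_SOURCES = frozenset({"hardware_camera", "hardware_microphone"})


@dataclass(frozen=True)
class CaptureReport:
    """Hypothetical description of how a biometric sample was captured."""

    source_kind: str              # e.g. "hardware_camera" or "virtual"
    app_integrity_verified: bool  # True if tamper checks on the app passed


def policy_violations(report: CaptureReport) -> list[str]:
    """List the reasons a sample should be rejected (empty list means accept)."""
    violations: list[str] = []
    if report.source_kind not in ALLOWED_SOURCES:
        violations.append(f"input source not allowed: {report.source_kind}")
    if not report.app_integrity_verified:
        violations.append("app integrity not verified")
    return violations


if __name__ == "__main__":
    print(policy_violations(CaptureReport("hardware_camera", True)))
    print(policy_violations(CaptureReport("virtual", False)))
```

A gate like this is only as trustworthy as the signals feeding it. If the report is produced by a tampered app, the attacker controls its contents, which is why the app's own logic needs testing too.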

3. Data integrity and feed control

The entire biometric authentication journey needs to be meticulously tested, starting from the mobile application itself through to its communication with external infrastructure, in order to uncover any additional security issues outside the user interaction layer.

For example, attackers might probe whether the underlying web/API application has business validation flaws that allow the biometric process to be skipped altogether. In other cases, business logic issues in the app may let an attacker subvert an individual step of the biometric authentication flow.

Why does this matter? Many systems still accept AI-generated content as real when it is injected directly into the device with no speaker or screen artefacts.

SECFORCE checks whether:

- Biometric payloads can be intercepted.

- Images, audio or other biometric samples can be swapped.

- The application enforces integrity checks on what it sends to the server.
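One common way to make swapped samples detectable is to bind each sample to a server-issued nonce with a keyed hash. The sketch below uses HMAC-SHA256 from Python's standard library and is an assumption on our part, not a description of any particular product: the function names, the 16-byte nonce length, and the key handling are illustrative, and a key stored inside a mobile app can itself be extracted by a determined attacker.

```python
import hashlib
import hmac
import secrets

NONCE_LENGTH = 16  # fixed length keeps the nonce/sample boundary unambiguous


def sign_sample(key: bytes, nonce: bytes, sample: bytes) -> bytes:
    """Compute an HMAC-SHA256 tag over the nonce followed by the sample."""
    if len(nonce) != NONCE_LENGTH:
        raise ValueError("nonce must be exactly 16 bytes")
    return hmac.new(key, nonce + sample, hashlib.sha256).digest()


def verify_sample(key: bytes, nonce: bytes, sample: bytes, tag: bytes) -> bool:
    """Check the tag in constant time; False means the payload was altered."""
    expected = sign_sample(key, nonce, sample)
    return hmac.compare_digest(expected, tag)


if __name__ == "__main__":
    key = secrets.token_bytes(32)              # shared secret (illustrative)
    nonce = secrets.token_bytes(NONCE_LENGTH)  # issued per authentication attempt
    sample = b"captured-frame-bytes"           # placeholder for real biometric data

    tag = sign_sample(key, nonce, sample)
    print(verify_sample(key, nonce, sample, tag))             # genuine payload
    print(verify_sample(key, nonce, b"swapped-frame", tag))   # swapped payload
```

A test of this kind of control would try to replace the sample in transit and confirm the server rejects it, and would also check that a tag captured for one nonce cannot be replayed against another.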

We also test whether an attack scaled up with powerful graphics processing units (GPUs) can bypass your data controls.

SECFORCE can replicate the same level of capability that an advanced threat actor would have. For example, a threat actor could generate thousands of slightly different deepfakes to try and “brute-force” a biometric authentication system. We can test for these eventualities.

SECFORCE Helps You Test Biometric Authentication and Prevent Biometric Attacks (Including Deepfakes)

Security testing allows you to put controls in place that make biometric attacks, including deepfakes, far less likely to bypass your defences.

Biometric attacks succeed because they fool systems. You can harden your system to stop fraudulent attempts from being scored like real ones and minimise the risk of these attacks slipping through.

SECFORCE has the technology to replicate both current and emerging threats facing biometric systems, including advanced deepfake attacks.

Contact us for a free scoping evaluation.